Before starting a new engagement with a client, I always ask a series of questions about their company, their processes, and the project I’ll be working on. One of those questions is how they’re currently handling user research and product design. I’m often told they “have a tight feedback loop,” and that’s all they have to say.

There’s a lot wrong with this answer, but let’s unpack it a little.

Rapid Prototyping

First and foremost, effective use of Rapid Prototyping can be immensely beneficial to a product. If there’s one thing I could convince every company in the world to do, it would be to use some type of rapid prototyping in their product design. If I got a fraction of all of the money spent building products that ultimately failed, I’d never have to work again.

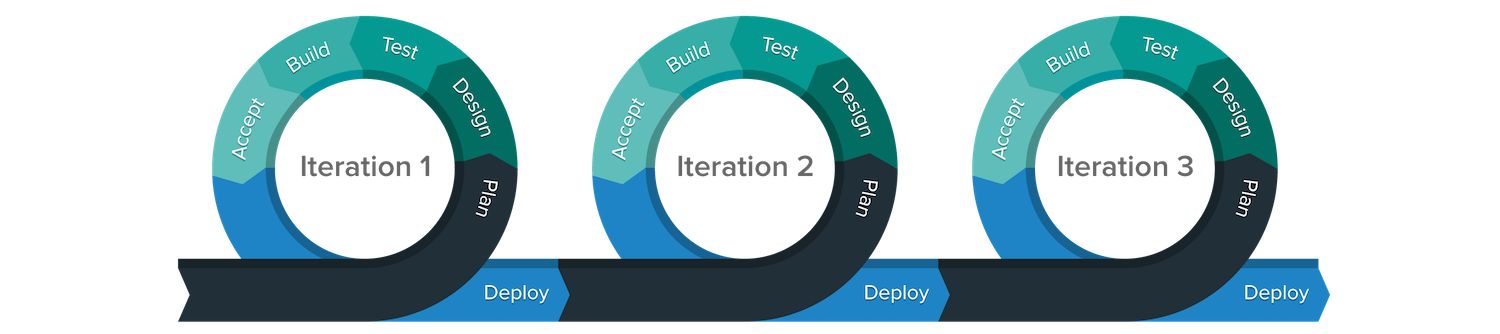

Ultimately, Rapid Prototyping is more a process or practice than a technique or a specific set of tools. It involves getting as much feedback as often as possible throughout the design process, which you accomplish by varying the level of fidelity of what you’re testing (more on this later). By exposing the current version of your product in all of its incomplete glory, you avoid sinking time into things that don’t work, and ultimately build a better product for your target user.

You can read more about the variety of approaches to Rapid Prototyping elsewhere, but here’s a brief overview of some of the options:

- Paper Prototypes - hand-drawn sketches of what an interface might look like

- Wizard of Oz - the practice of playing the role of the computer by abstracting future functionality away

- Testing Your Competitor - before building your solution, see what problems users have with existing solutions

- Interactive Prototypes - semi-functional software systems users can interact with

Let’s Talk About Fidelity

There’s no single agreed-upon definition of fidelity, but it roughly refers to the level of detail your current iteration includes. It’s often used in the context of prototyping, but it can refer to existing products as well. Your typical startup MVP is a high-fidelity prototype but a low-fidelity product. Fidelity exists on a continuum, with the lower end including concepts, sketches, and rough wireframes, and the higher end including branded, visually designed, and interactive prototypes. I’ve seen far too many products that jump right into the deep end of fidelity, missing out on all of the potential learning from testing lower-fidelity prototypes.

It might seem counterintuitive to recommend using a lower level of detail, but you have to match your level of fidelity to the type of feedback you’re looking for. Your users will respond to the fidelity they’re given, and their feedback will be colored by it. If you give them paper, they’ll talk about things like workflow, high-level design, and desired features. If you give them highly designed (read: Sketch/Photoshop) images, they’ll talk about things like color scheme, fonts, and whitespace. If you give them an interactive prototype, they’ll talk about things like transitions, interactivity, and performance.

This graph, along with more great coverage of the pluses and minuses of different levels of fidelity, is from UX Matters.

Why It Matters

It can be intoxicating to set a baseline and watch your numbers improve on whatever metrics you’ve chosen as you iterate. Arguably, data-informed design is a good thing, especially compared to the alternative. The problem comes when data starts driving your decisions and you lose sight of the big picture: improving the experience for the end user. Increased conversions, decreased errors, or more efficient interactions don’t necessarily add up to the most enjoyable experience (e.g. a wait time might actually be a good thing).

The problem with this approach is that it might be homing in on what’s known as a local maximum. Naive hill-climbing algorithms can fall victim to this problem as well. Essentially, you continue to make changes and see improvements until you reach a point where those improvements either vanish or turn into regressions. At this point, conventional wisdom would be to stop and either a) work on something else, or b) declare yourself victorious and toast your achievement, all the while missing what could be a better solution.
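To make the trap concrete, here’s a minimal Python sketch of naive hill climbing. Everything in it is made up for illustration: `satisfaction` is a hypothetical scoring function with a small peak at 2 and a taller one at 8, and `hill_climb` is just one simple way to write the algorithm, not a reference implementation.

```python
def hill_climb(score, start, step=1, max_iters=1000):
    """Naive hill climbing: keep moving to the better neighbor until
    neither neighbor improves on the current position."""
    current = start
    for _ in range(max_iters):
        neighbors = [current - step, current + step]
        best = max(neighbors, key=score)
        if score(best) <= score(current):
            break  # no neighbor is better, so we declare victory
        current = best
    return current


def satisfaction(x):
    """Hypothetical design landscape (an assumption for this sketch):
    a small peak at x=2 (height 4) and a taller peak at x=8 (height 9)."""
    return max(4 - (x - 2) ** 2, 9 - (x - 8) ** 2)


# Iterating from x=0 only ever finds the nearby, smaller peak.
stuck = hill_climb(satisfaction, start=0)
print(stuck, satisfaction(stuck))    # 2 4

# A different starting point reaches the taller peak.
better = hill_climb(satisfaction, start=6)
print(better, satisfaction(better))  # 8 9
```

Starting from 0, every individual step looks like progress, and the climb stops at 2 because both neighbors score worse. Nothing in the numbers hints that a better peak exists at 8; you only find it by starting somewhere else.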

On a philosophical note, I like to think of every design problem (if not every problem in life) as something like the above graphic, except the plane is infinite – there are always other options, some of which might be better than our current situation if we’re just willing to take the leap of faith and try a new approach.

Fighting the Local Maximum

So now we’re aware of the problem and what causes it. That doesn’t necessarily tell us what to do about it, though. Here’s a great strategy outlined in an article from 2010:

I couldn’t’ve said it better myself.

A brief plug

Did you find yourself reading this post and thinking “our products have definitely suffered from the wrong type of feedback”? Part of the reason this has come up a lot for me is I’m looking into a product-manager-as-a-service model. You should contact me if you’d like to hear more.
